KVM Setup

I originally set up my main server and virtual machines all with Ubuntu. However, I have since started using Debian and find it leaner than Ubuntu, so I am slowly moving my various servers and virtual machines to Debian.

To install the KVM hypervisor: `sudo apt install qemu-kvm qemu-system qemu-utils libvirt-clients libvirt-daemon-system virtinst bridge-utils` (bridge-utils is optional).

Other useful packages:

- `genisoimage` is a package to create ISO images

- `libguestfs-tools` provides tools to access and modify VM disk images

- `libosinfo-bin` provides guest operating system information to assist VM creation

To clone a VM, use the built-in clone facility (the source VM must be shut down or paused first): `sudo virt-clone --connect=qemu://example.com/system -o this-vm -n that-vm --auto-clone`. This makes a copy of this-vm, named that-vm, and takes care of duplicating storage devices.

To list all defined virtual machines: `virsh list --all`.

Alternatively, a VM can be copied by hand. To dump a virtual machine's XML definition to a file: `virsh dumpxml {vir_machine} > /dir/file.xml`.

Modify the following XML tags in the copy:

- `<name>VM_name</name>`: be careful not to use an existing VM name, whether running, paused or stopped.

- `<uuid>869d5df0-13fa-47a0-a11e-a728ae65c86d</uuid>`: use `uuidgen` to generate a new unique UUID.

- Change the VM source file as required: `<source file='/path_to/vm_image_file.img'/>`

- `<mac address='52:54:00:70:a9:a1'/>`: this can't be the same as any other machine on the same local network.

To define a virtual machine from the edited XML file: `sudo virsh define /path_to/name_VM_xml_file.xml`.

The XML of a defined VM can also be edited directly with `virsh edit VM_name`.
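The manual copy steps above could be scripted roughly as follows. This is a minimal bash sketch, not a libvirt tool: `rewrite_domain_xml` and `random_kvm_mac` are hypothetical helper names, and it assumes the dumped XML contains a single `<name>`, `<uuid>`, disk `<source file='...'/>` and `<mac address='...'/>` entry, and that the new values contain no `|` or `&` characters (they would confuse `sed`).

```shell
#!/usr/bin/env bash
# Sketch: rewrite a `virsh dumpxml` copy so it defines a new, separate VM.
# Hypothetical helpers; assumes one <name>, <uuid>, disk source and MAC entry.

# Print a random MAC address using the 52:54:00 prefix of QEMU/KVM guests.
random_kvm_mac() {
  printf '52:54:00:%02x:%02x:%02x\n' \
    $((RANDOM % 256)) $((RANDOM % 256)) $((RANDOM % 256))
}

# rewrite_domain_xml IN OUT NAME UUID IMAGE MAC
# Copy IN to OUT, replacing the VM name, UUID, disk file and MAC address.
# Only the attribute value is replaced, so extra attributes such as
# index='1' (present when dumping a running VM) are kept.
rewrite_domain_xml() {
  local in=$1 out=$2 name=$3 uuid=$4 image=$5 mac=$6
  sed -e "s|<name>[^<]*</name>|<name>${name}</name>|" \
      -e "s|<uuid>[^<]*</uuid>|<uuid>${uuid}</uuid>|" \
      -e "s|<source file='[^']*'|<source file='${image}'|" \
      -e "s|<mac address='[^']*'|<mac address='${mac}'|" \
      "$in" > "$out"
}

# Example (paths are placeholders):
#   sudo virsh dumpxml this-vm > this-vm.xml
#   sudo cp -p /path_to/this-vm.qcow2 /path_to/that-vm.qcow2
#   rewrite_domain_xml this-vm.xml that-vm.xml that-vm "$(uuidgen)" \
#     /path_to/that-vm.qcow2 "$(random_kvm_mac)"
#   sudo virsh define that-vm.xml
```

Alternatively, deleting the `<uuid>` and `<mac address>` lines from the copy lets libvirt generate new values when the XML is defined.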

To get virtual disk image information: `sudo qemu-img info /path_to/name_VM_file.img`

A compacted qcow2 image can be created with: `sudo qemu-img convert -O qcow2 -c old.qcow2 new_compacted_version_of_old.qcow2`

Copy to New Server

I created a new Debian server on the same hardware as the original Ubuntu server. Only one server could be running at a time, selected through the UEFI boot options. Here are the steps I took and the problems I ran into.

- I backed up copies of the VMs while they were stopped.

- I did some file and naming clean-ups.

- The VMs are stored on a separate drive, not the server root drive, and backed up to another separate drive.

- The VM XML files were exported to the backup drive, in the same directory as the VM backups.

- Using the same host name and IP address caused an SSH host key error. To solve this, I gave the new machine 2 IP addresses: the original server address and a separate, new, unique address.

- Keeping the original IP address allowed NFS for the VMs to work without any configuration changes, so it keeps working on both the new and old servers.

- The new unique secondary address allows SSH access to the new server without needing to change the SSH keys. Once the original server is permanently decommissioned, the secondary IP address will no longer be required.

- When attempting to start the old VMs on the new server, there was an error with the machine type. The command `kvm-spice -machine help` shows the machine types supported by the current KVM server. Simply changing the machine value in `<type arch='x86_64' machine='pc-i440fx-5.2'>hvm</type>` to one listed by `kvm-spice` corrected this problem.
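A bash sketch of the same fix, assuming the domain XML is first saved with `virsh dumpxml` and then re-defined. The helper names are hypothetical, `qemu-system-x86_64 -machine help` prints the same kind of list, and `pc-i440fx-7.2` below is only an example value; use one your own server reports.

```shell
#!/usr/bin/env bash
# Sketch: list supported machine types and rewrite the machine='...' value.
# Helper names are hypothetical; the XML is assumed to come from virsh dumpxml.

# Read `kvm-spice -machine help` (or `qemu-system-x86_64 -machine help`) on
# stdin and print just the machine names, skipping the header line.
list_machine_types() {
  awk 'NR > 1 && NF { print $1 }'
}

# set_machine_type IN OUT NEW_TYPE: copy IN to OUT with a new machine type
# on the <type arch='...' machine='...'> line.
set_machine_type() {
  local in=$1 out=$2 new=$3
  sed -e "s|\(<type arch='[^']*' machine='\)[^']*'|\1${new}'|" "$in" > "$out"
}

# Example:
#   kvm-spice -machine help | list_machine_types | grep i440fx
#   sudo virsh dumpxml old-vm > old-vm.xml
#   set_machine_type old-vm.xml fixed.xml pc-i440fx-7.2
#   sudo virsh define fixed.xml
```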

Windows 10 on KVM

I have not used Windows on a VM since circa 2021; there is just no need. I do have a dual boot on my main desktop that defaults to Debian testing and can boot to Windows 11 when I need Windows-based software. My sons all still use Windows exclusively on their computers and game consoles, so I still have a family MS Office 365 subscription. This gives access to MS Office and 1 TB of Microsoft cloud storage each. A few years back I had poor performance running Windows 7, 8/8.1 and 10 on KVM. A major frustration was that I could not seem to get more than 2 CPUs working on the Windows VM, even though I assigned 4. Performance was very poor, with CPU usage usually saturated under any load and relatively high even when idle. I found out early on that Windows limits the number of CPUs that can be used: 1 on Home, 2 on Professional, 4 on Workstation and more on Server versions, at least that was my understanding. As I did not have a great need for the Windows VM, I did not try too hard and basically did not use it.

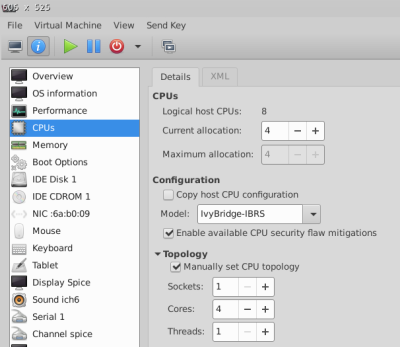

What I recently discovered was that this Windows OS limitation is not on the number of logical CPUs, but on the number of sockets configured. Furthermore, KVM allows the CPU topology to be configured as sockets, cores and threads (see the picture). Limiting the number of sockets actually makes sense for a paid OS, so there seems to be no limit on the number of cores and threads, only on the number of sockets. Sadly, the default KVM topology assigns all the virtual CPUs as sockets, with 1 core (per socket) and 1 thread (per core). When I set the manual CPU topology option to 1 socket with 4 cores (per socket) and 1 thread (per core), my Windows 10 VM could see all 4 cores and performance increased dramatically. With further use, I seemed to get the best performance with 6 cores for the Windows VM. It is basically usable now.

BTW my server hardware configuration is: 1 Socket, 8 Cores (/Socket) & 1 Thread(/Core)
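The same topology can also be written straight into the domain XML with `virsh edit`. The hypothetical helper below just prints the two elements for a given sockets/cores/threads split: the `<vcpu>` line replaces the existing one, and the `<topology>` line goes inside the domain's `<cpu>` element (add a `<cpu>` element if there is none). libvirt expects the product of sockets, cores and threads to equal the vCPU count.

```shell
#!/usr/bin/env bash
# Sketch: print libvirt XML for a chosen CPU topology.
# The <vcpu> line replaces the domain's existing one; the <topology> line goes
# inside its <cpu> element. sockets * cores * threads must equal the vCPU count.

# cpu_topology_xml SOCKETS CORES_PER_SOCKET THREADS_PER_CORE
cpu_topology_xml() {
  local sockets=$1 cores=$2 threads=$3
  printf "<vcpu placement='static'>%d</vcpu>\n" $((sockets * cores * threads))
  printf "<topology sockets='%d' cores='%d' threads='%d'/>\n" \
    "$sockets" "$cores" "$threads"
}

# Example for the Windows 10 VM (1 socket, 6 cores, 1 thread):
#   cpu_topology_xml 1 6 1
#   then paste the output into `sudo virsh edit <windows-vm>`
```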


My understanding is that Windows Professional only allows one user to be actively logged in at any time either locally or remotely. This limitation was never a concern for me.

KVM Backup

There seem to be 3 main ways to back up a KVM virtual machine:

- Shut down the VM first and then copy the file. Start the VM again after the copy is completed.

- Use the `virsh backup-begin` command to take a live full backup.

- Live backup using a snapshot:

  - Create a snapshot of the VM and direct all changes to the snapshot, allowing a safe backup of the main VM file.

  - Active block commit the snapshot back into the main file and verify the block commit worked.

- Copying the main file(s) while the VM is running is not recommended: the running VM may, and probably will, modify the file during the copy, so file corruption will probably occur.
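A minimal bash sketch of the snapshot method, assuming a root shell and a running VM with a single disk (for example target `vda`). `live_backup`, `disk_source` and the overlay file name are hypothetical; `--no-metadata` keeps libvirt from tracking the temporary snapshot, and the block commit always runs, even if the copy fails, so the VM is not left on the overlay.

```shell
#!/usr/bin/env bash
# Sketch of the live "snapshot, copy, blockcommit" backup for ONE disk.
# Assumptions: root shell, running single-disk VM; the overlay name
# "<image>.backup-overlay.qcow2" is an arbitrary choice.

# disk_source VM TARGET: print the file behind a disk target such as vda.
disk_source() {
  virsh domblklist "$1" | awk -v t="$2" '$1 == t { print $2 }'
}

# live_backup VM TARGET BACKUP_DIR
live_backup() {
  local vm=$1 target=$2 dest=$3 base overlay copied=0
  base=$(disk_source "$vm" "$target")
  [ -n "$base" ] || { echo "no disk $target on $vm" >&2; return 1; }
  overlay="${base%.*}.backup-overlay.qcow2"

  # 1. Send all new guest writes to an external overlay; the base file is now static.
  virsh snapshot-create-as --domain "$vm" --name backup-tmp \
    --diskspec "$target,file=$overlay" --disk-only --atomic --no-metadata || return 1

  # 2. Copy the base image while the VM keeps running on the overlay.
  cp -p "$base" "$dest/" && copied=1

  # 3. Active block commit: merge the overlay back into the base and pivot to it.
  virsh blockcommit "$vm" "$target" --active --pivot --verbose || return 1

  # 4. Verify the VM is using the base file again before deleting the overlay.
  if [ "$(disk_source "$vm" "$target")" != "$base" ]; then
    echo "VM is not back on $base; keeping $overlay" >&2
    return 1
  fi
  rm -f "$overlay"
  [ "$copied" = 1 ]
}

# Example:
#   live_backup this-vm vda /mnt/backup/kvm
```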

KVM Offline Backup

Note: this only works on VMs that are shut down.

- `sudo virsh list --all` to list all KVM virtual machines.

- `sudo virsh dumpxml VM_name | grep -i "source file"` to list the VM source file location noted in the VM XML file.

- `sudo virsh dumpxml vm-name > /path/to/xml_file.xml` to archive/back up the VM XML definition file.

- `sudo cp -p /working/path/VM_image.qcow2 /path/to/` to archive/move the VM file.

- `sudo virsh undefine vm-name --remove-all-storage` to undefine the VM and remove its storage. (Be careful with this one!)

- `sudo virsh define --file <path-to-xml-file>` to import (define) a VM from an XML file.
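The offline steps above, apart from the destructive `undefine`, can be combined into one function. A sketch, assuming a root shell and disk paths without spaces; `offline_backup` and `disk_paths` are hypothetical names.

```shell
#!/usr/bin/env bash
# Sketch of the offline backup steps. Assumptions: root shell, disk paths
# contain no spaces; helper names are hypothetical.

# disk_paths: read `virsh domblklist --details` output on stdin and print the
# source path of each file-backed disk, skipping the header and CD-ROM drives.
disk_paths() {
  awk 'NR > 2 && $1 == "file" && $2 == "disk" { print $4 }'
}

# offline_backup VM BACKUP_DIR: save the XML definition and copy every disk.
offline_backup() {
  local vm=$1 dest=$2 disks disk
  if [ "$(virsh domstate "$vm")" != "shut off" ]; then
    echo "$vm is not shut off; refusing to copy its disks" >&2
    return 1
  fi
  mkdir -p "$dest" || return 1
  virsh dumpxml "$vm" > "$dest/$vm.xml" || return 1
  disks=$(virsh domblklist "$vm" --details | disk_paths)
  while IFS= read -r disk; do
    [ -n "$disk" ] || continue
    cp -p "$disk" "$dest/" || return 1   # -p keeps owner, mode and timestamps
  done <<< "$disks"
}

# Example (from a root shell):
#   offline_backup this-vm /path/to/kvm_backup
```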

References:

KVM backup links:

- Backup and Restore KVM VMs - Shutdown VM method

- Bacula: Technical Considerations of a KVM Backup Process - Shutdown VM method

---

KVM Cheat Sheet

There are perhaps too many of these, so I will keep this list very short and simple, with the most useful options.

- `sudo virsh nodeinfo`: display node (host) information

- `sudo virsh list --all`: list all domains; the `--all` option ensures inactive domains are listed

- `sudo virsh dominfo domain-name`: list information about domain `domain-name` or `domain-id`

- `sudo virsh domiflist domain-name`: list the network interface(s) of domain `domain-name` or `domain-id`

- `sudo virsh domblklist domain-name`: locate the disk file(s) of an existing VM, by `domain-name` or `domain-id`

- `sudo virsh domrename currentname newname`: rename a domain (the domain must be shut down)

- `sudo virsh dumpxml domain-name > /dir_tree/kvm_backup/domain-name.xml`: copy the domain definition of `domain-name` to an XML file

- `sudo virsh dumpxml domain-name`: print the XML domain definition of `domain-name`

- `virsh define --file /dir_tree/kvm_backup/domain-name.xml`: restore a VM definition from an XML file. The file is a normal one created by `virsh dumpxml`, and `domain-name` is taken from this definition file

- `sudo virsh pool-list`: list storage pools

- `sudo virsh vol-list --pool pool-name`: list volumes in `pool-name`

- `sudo virsh undefine domain-name`: remove the VM definition (the disk image files are kept)

virsh: start, shutdown, destroy, reboot, or pause (suspend / resume):

- `sudo virsh start domain-name`: start the virtual machine `domain-name`

- `sudo virsh shutdown domain-name`: shut down a VM by `domain-name` or `domain-id` (this initiates a shutdown inside the VM, which could take some time to actually stop)

- `sudo virsh destroy domain-name`: destroy a VM by `domain-name` or `domain-id` (effectively a forced power-off, which could corrupt a working VM)

- `sudo virsh reboot domain-name`: reboot (shut down and restart) a VM by `domain-name` or `domain-id`

- `sudo virsh suspend domain-name`: suspend (pause) a running VM by `domain-name` or `domain-id`; all CPU, device and I/O activity is paused, but the VM remains in memory, ready for immediate resume

- `sudo virsh resume domain-name`: resume (un-pause) a suspended VM by `domain-name` or `domain-id`

- `virsh --help` or `man virsh`: virsh help

- `virsh list --help`: help specific to `virsh list`

Where:

- VM = Virtual Machine

Notes:

- Only running VMs are given a numerical Id
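`virsh shutdown` returns as soon as the request is sent, so a script that needs the VM stopped (for example before an offline backup) has to wait for it. A minimal sketch, assuming a root shell; `shutdown_and_wait` and its 120-second default timeout are arbitrary choices, and it deliberately does not fall back to `virsh destroy`.

```shell
#!/usr/bin/env bash
# Sketch: request a clean guest shutdown and wait until libvirt reports it off.
# Assumes a root shell; never falls back to `virsh destroy` (forced power-off).

# shutdown_and_wait VM [TIMEOUT_SECONDS]   (default timeout: 120 s)
shutdown_and_wait() {
  local vm=$1 timeout=${2:-120} waited=0
  if [ "$(virsh domstate "$vm")" = "shut off" ]; then
    return 0                               # already stopped
  fi
  virsh shutdown "$vm" > /dev/null || return 1
  while [ "$(virsh domstate "$vm")" != "shut off" ]; do
    if [ "$waited" -ge "$timeout" ]; then
      echo "$vm still running after ${timeout}s" >&2
      return 1
    fi
    sleep 2
    waited=$((waited + 2))
  done
  return 0
}

# Example:
#   shutdown_and_wait this-vm 300 && echo "safe to copy the disk now"
```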

KVM QEMU Commands

Change the Disk Allocated Size

How to change the amount of disk space assigned to a KVM VM. Reference: How to Resize a qcow2 Image and Filesystem with Virt-Resize.

- First, shut down the virtual machine to be resized.

- Next, find the file location of the virtual machine's disk.

- Query the file: `sudo qemu-img info /path_vm/vm_name.img`

- Increase the allowed size of the VM disk: `sudo qemu-img resize /path_vm/vm_name.img +20G`

- Make a backup of the VM disk: `sudo cp /path_vm/vm_name.img /path_vm/vm_name_backup.img`

- Check the file systems on the VM disk: `virt-filesystems --long -h --all -a /path_vm/vm_name.img`. This also confirms the correct partition to expand.

- Use the backup VM disk to create a new, expanded drive: `sudo virt-resize --expand /dev/sda1 /path_vm/vm_name_backup.img /path_vm/vm_name.img`

The `virt-filesystems` and `virt-resize` commands may not be installed by default; they can be installed with `sudo apt install guestfs-tools`.
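The resize steps above, in the same order, as a bash sketch. Assumptions: a root shell, guestfs-tools installed, the VM already shut off, and room for a full copy of the image; `grow_vm_disk` and the `_backup` file naming are hypothetical.

```shell
#!/usr/bin/env bash
# Sketch of the resize steps, in the order given above.
# Assumes a root shell, guestfs-tools installed, the VM already shut off,
# and enough free space for a full copy of the image.

# grow_vm_disk VM IMAGE SIZE PARTITION
#   e.g. grow_vm_disk vm_name /path_vm/vm_name.img +20G /dev/sda1
grow_vm_disk() {
  local vm=$1 image=$2 size=$3 part=$4
  local backup="${image%.*}_backup.${image##*.}"   # vm_name.img -> vm_name_backup.img
  if [ "$(virsh domstate "$vm")" != "shut off" ]; then
    echo "shut $vm down first" >&2
    return 1
  fi
  qemu-img info "$image" || return 1            # current virtual/actual size
  qemu-img resize "$image" "$size" || return 1  # grow the virtual disk
  cp "$image" "$backup" || return 1             # source copy for virt-resize
  virt-filesystems --long -h --all -a "$backup" || return 1  # check partitions
  # Copy the backup into the larger image, expanding PARTITION to fill it.
  virt-resize --expand "$part" "$backup" "$image"
}
```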

Shrink the Disk File

- Locate the QEMU disk file

- Shut down the VM.

- Copy the VM file to a back-up:

cp image.qcow2 image.qcow2_backup

- Option #1: Shrink your disk without compression (better performance, larger disk size):

qemu-img convert -O qcow2 image.qcow2_backup image.qcow2

- Option #2: Shrink your disk with compression (smaller disk size, takes longer to shrink, performance impact on slower systems):

qemu-img convert -O qcow2 -c image.qcow2_backup image.qcow2

Example: an 11 GB disk file I shrank without compression remained basically unchanged at 11 GB, but with compression it shrank to 5.2 GB. Compression took longer, and how long depends on the hardware used.

- Boot your VM and verify all is working.

- When you verify all is well, it should be safe to either delete the backup of the original disk, or move it to an offline backup storage.
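The copy-and-convert steps above as a small bash function. A sketch, assuming a root shell and a shut-down VM; `shrink_qcow2` is a hypothetical name, and the backup is kept so it can be deleted or archived once the VM has been checked.

```shell
#!/usr/bin/env bash
# Sketch of the shrink steps: keep IMAGE_backup, rebuild IMAGE from it.
# Assumes a root shell and that the VM using IMAGE is shut down.

# shrink_qcow2 IMAGE [compress]
shrink_qcow2() {
  local image=$1 mode=${2:-}
  local backup="${image}_backup"
  cp -p "$image" "$backup" || return 1
  if [ "$mode" = compress ]; then
    # Option #2: smaller file, slower to create, some runtime cost.
    qemu-img convert -O qcow2 -c "$backup" "$image"
  else
    # Option #1: no compression, better performance.
    qemu-img convert -O qcow2 "$backup" "$image"
  fi || return 1
  # Report the file sizes (in bytes) before and after.
  printf '%s: %s -> %s bytes\n' "$image" \
    "$(stat -c %s "$backup")" "$(stat -c %s "$image")"
}

# Example:
#   shrink_qcow2 /path_vm/image.qcow2 compress
#   # boot the VM, check it, then delete or archive /path_vm/image.qcow2_backup
```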

How to mount VM virtual disk on KVM hypervisor

There seem to be a number of methods to do this.

In all cases the VM (Virtual Machine) must be in shutdown state.

libguestfs method

One method is to use the libguestfs tool set; however, it is very heavy with many dependencies, so I decided not to pursue this option.

Mount a qcow2 image directly

To check whether it is already installed: `sudo apt list --installed | grep qemu-utils`

To install: `sudo apt install qemu-utils`

The nbd (network block device) kernel module needs to be loaded to mount qcow2 images.

- Load the module: `sudo modprobe nbd max_part=8` loads it with support for up to 8 partitions per nbd device. (If more partitions are required, use 16, 32 or 64.)

- Check the VMs: `sudo virsh list --all`.

- If the VM to be mounted is active, shut it down with `sudo virsh shutdown <domain-name or ID>`.

- Use `sudo virsh domblklist <domain-name>` to get the full path and file name of the VM image file.

- Use `ls -l /dev/nbd*` to check whether any nbd devices have already been defined.

- Use `sudo qemu-nbd -c /dev/nbd0 <image.qcow2>` to connect the VM image to the block device. `sudo fdisk -l /dev/nbd0` will list the available partitions in /dev/nbd0.

- Use `sudo partprobe /dev/nbd0` to update the kernel's partition list.

- Use `ls -l /dev/nbd0*` to see the available partitions on the image.

- If the image partitions are not managed by LVM, they can be mounted directly.

- If a mount point does not already exist, create one: `sudo mkdir /mnt/image`.

- The device can then be mounted with `sudo mount /dev/nbd0p1 /mnt/image`, or with `sudo mount -r /dev/nbd0p1 /mnt/image` to mount read-only, or `sudo mount -w /dev/nbd0p1 /mnt/image` to mount explicitly read-write.

When complete, clean up with the following commands:

- Unmount the block device: `sudo umount /mnt/image`

- Disconnect the network block device: `sudo qemu-nbd -d /dev/nbd0`

- If required, the VM can be restarted with `sudo virsh start <domain-name>`
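The mount and clean-up steps above for a non-LVM partition, as a pair of bash functions. A sketch, assuming a root shell, a shut-down VM and a free `/dev/nbd0`; `nbd_mount` and `nbd_umount` are hypothetical names, and mounting read-only by default is a deliberate safety choice.

```shell
#!/usr/bin/env bash
# Sketch of the nbd mount / clean-up steps for a partition not managed by LVM.
# Assumes a root shell, the VM shut down, and /dev/nbd0 not already in use.

# nbd_mount IMAGE PARTITION_NUMBER MOUNTPOINT [ro|rw]   (default: ro)
nbd_mount() {
  local image=$1 part=$2 mnt=$3 mode=${4:-ro}
  modprobe nbd max_part=8 || return 1
  qemu-nbd -c /dev/nbd0 "$image" || return 1
  partprobe /dev/nbd0                # make the kernel re-read the partition table
  mkdir -p "$mnt" || return 1
  if [ "$mode" = rw ]; then
    mount "/dev/nbd0p$part" "$mnt"
  else
    mount -r "/dev/nbd0p$part" "$mnt"
  fi || { qemu-nbd -d /dev/nbd0; return 1; }   # detach again if mount failed
}

# nbd_umount MOUNTPOINT: unmount, then disconnect /dev/nbd0.
nbd_umount() {
  umount "$1" && qemu-nbd -d /dev/nbd0
}

# Example:
#   nbd_mount /path_vm/vm_name.qcow2 1 /mnt/image
#   ls /mnt/image
#   nbd_umount /mnt/image
```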

Mount a qcow2 image with LVM

Links:

- KVM Guest Corrupted - Recovery Options and Related

  - `sudo modprobe nbd max_part=8` to enable the nbd (network block device) kernel module on the host

  - `sudo qemu-nbd --connect=/dev/nbd0 /mnt/kvm/VMname.qcow2` to connect the qcow2 file as a network block device

  - `sudo fdisk -l /dev/nbd0` to list the partitions in the qcow2 VM file

  - `sudo fsck /dev/nbd0p1` to fix the corrupted disk of the VM

  - `sudo qemu-nbd --disconnect /dev/nbd0` to disconnect the network block device

- Qemu-discuss How to fix/recover corrupted qcow2 images
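The repair steps from the first link, with the disconnect always run even if `fsck` reports errors, could look like this. A sketch, assuming a root shell and a shut-down VM; `nbd_fsck` is a hypothetical name and `/dev/nbd0` is assumed to be free.

```shell
#!/usr/bin/env bash
# Sketch of the qcow2 repair steps: connect, fsck one partition, disconnect.
# Assumes a root shell, the VM shut down, and /dev/nbd0 not already in use.

# nbd_fsck IMAGE PARTITION_NUMBER
# Returns fsck's own exit status (0 = clean, 1 = errors corrected, ...).
nbd_fsck() {
  local image=$1 part=$2 rc
  modprobe nbd max_part=8 || return 1
  qemu-nbd --connect=/dev/nbd0 "$image" || return 1
  partprobe /dev/nbd0
  fsck "/dev/nbd0p$part"
  rc=$?
  # Always disconnect, even when fsck found (or could not fix) errors.
  qemu-nbd --disconnect /dev/nbd0
  return "$rc"
}

# Example:
#   nbd_fsck /mnt/kvm/VMname.qcow2 1
```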

Some key points:

- `sudo virsh` to get into virsh, the virtualisation interactive terminal. Once inside virsh:

  - `list` to list running VMs, or `list --all` to list all defined VMs, running or shut down

  - `edit VM_name` to edit the XML configuration of the VM named VM_name

- `sudo virsh define XXX.xml` to add a VM (XXX.xml) to virsh persistently. The VM is not started. The VM XML definition files can be found in `/etc/libvirt/qemu`.

- `sudo virsh start VM_name` to start the VM. (Also reboot, reset, shutdown, destroy.)

- `sudo virsh help | less` to list all the virsh commands

Some links: